Senior Scala Developer

My main professional interests revolve around functional programming and designing and building software, so the content of this blog will mainly be about Software Architecture and Functional Programming.

Recently I experimented with Scala 3, ZIO 2, zhttp, zio-json and Laminar. You can see the proof of concept here: https://github.com/adrianfilip/reservation-booker.

I made the following setup:

You are probably more interested in the code, but I want to leave you with a few things I found interesting and a few highlights:

I no longer had to default to supertypes at lower levels and lose precision. This means more information about how something can fail, at every level.

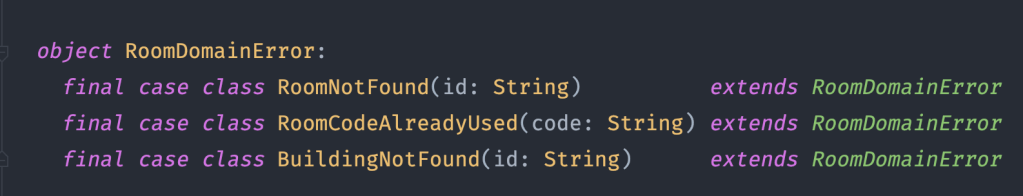

Notice that in the RoomRepository update operation I can use a subset of the RoomDomainError.
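To make the idea concrete, here is a minimal sketch using Scala 3 union types. The error cases, the `Room` shape and the `updateRoom`, `updateForService` and `describe` functions are hypothetical, and `Either` stands in for ZIO's error channel so that the sketch needs only the standard library:

```scala
// Hypothetical domain errors standing in for the project's RoomDomainError.
sealed trait RoomDomainError
final case class RoomNotFound(id: String) extends RoomDomainError
final case class RoomAlreadyExists(id: String) extends RoomDomainError
final case class InvalidCapacity(capacity: Int) extends RoomDomainError

final case class Room(id: String, capacity: Int)

// The update can fail in only two of the three ways, and its signature says exactly that.
def updateRoom(
    rooms: Map[String, Room],
    room: Room
): Either[RoomNotFound | InvalidCapacity, Map[String, Room]] =
  if room.capacity <= 0 then Left(InvalidCapacity(room.capacity))
  else if !rooms.contains(room.id) then Left(RoomNotFound(room.id))
  else Right(rooms.updated(room.id, room))

// Callers that only know the full sum type can still use it: the union widens automatically.
def updateForService(rooms: Map[String, Room], room: Room): Either[RoomDomainError, Map[String, Room]] =
  updateRoom(rooms, room)

// Matching on the narrow type is exhaustive with just two cases.
def describe(error: RoomNotFound | InvalidCapacity): String = error match
  case RoomNotFound(id)     => s"room $id does not exist"
  case InvalidCapacity(cap) => s"capacity $cap is not positive"
```

Because `Either` is covariant, the narrow signature still fits wherever the full `RoomDomainError` is expected.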

In the frontend we can consider the pages/subpages as the domains and define boundaries around them. How would that be done?

My approach was to juggle several event buses:

Let’s say we have the following structure for events:

The application event bus would support Event above meaning all types of events.

The page event bus for MyReservations would support only MyResevationsPageEvents because in the MyReservations page we don’t really care about other domains.

We can actually go even further and narrow what events are supported at a component level if we think the page is too broad for our context.

We can do that by restricting the types of events that go into and come out of the MakeReservation component, which lives in the MyReservations page. As you can see in the picture below, this restriction is basically a filter on what can be consumed from and published to the app event bus.

I did not see a way to turn an EventBus[A] into an EventBus[B] directly, so I made an EventStream and an Observer to cover consuming and producing (using map and contramap does the trick here). In practice this amounts to the same thing, since an EventStream and an Observer used together basically form an event bus.
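The narrowing idea can be sketched without Laminar. The `MiniStream`, `MiniObserver` and `MiniBus` classes below are hypothetical stand-ins for Airstream's `EventStream`, `Observer` and `EventBus`, and the event names are illustrative. `collect` filters what the page consumes and `contramap` adapts what the page publishes:

```scala
import scala.collection.mutable.ListBuffer

// Hypothetical event hierarchy: app-wide events and page-scoped events.
sealed trait Event
sealed trait MyReservationsPageEvent extends Event
final case class ReservationMade(id: String) extends MyReservationsPageEvent
final case class BuildingsLoaded(names: List[String]) extends Event

// Consuming side: a stream of values that subscribers can listen to.
final class MiniStream[A](register: (A => Unit) => Unit):
  def foreach(f: A => Unit): Unit = register(f)
  // Only values matched by the partial function reach subscribers.
  def collect[B](pf: PartialFunction[A, B]): MiniStream[B] =
    MiniStream[B](f => register(a => pf.lift(a).foreach(f)))

// Producing side: something values can be written to.
final class MiniObserver[A](val onNext: A => Unit):
  // Adapt a writer of A into a writer of B.
  def contramap[B](f: B => A): MiniObserver[B] = MiniObserver[B](b => onNext(f(b)))

// A synchronous bus: everything written is delivered to current listeners.
final class MiniBus[A]:
  private val listeners = ListBuffer.empty[A => Unit]
  val events: MiniStream[A] = MiniStream[A](f => listeners += f)
  val writer: MiniObserver[A] = MiniObserver[A](a => listeners.foreach(_(a)))

// The page only sees, and can only emit, MyReservationsPageEvent.
val appBus = MiniBus[Event]()
val pageEvents: MiniStream[MyReservationsPageEvent] =
  appBus.events.collect { case e: MyReservationsPageEvent => e }
val pageWriter: MiniObserver[MyReservationsPageEvent] =
  appBus.writer.contramap[MyReservationsPageEvent](e => e)
```

Together, `pageEvents` and `pageWriter` behave like a page-level bus backed by the application bus.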

At the component level we can add a component (or local) event bus for dealing with events returned by our backend calls and also any other local events we may define.

Here is how it can look for the MakeReservation component.

That is a lot of event types. (We could make it look nicer if we wanted to; I see it as an advantage that we can defer deciding how to structure the types until the solution is clearer.) But what is happening there?

We defined an event bus that we can use for:

That’s a lot of text about event buses and nothing about handlers, so let’s catch up, this time going through the buses in reverse order.

Handlers correspond to each bus type.

I am using io.laminext.fetch as the HTTP client.

HTTP calls to the backend return JSON responses when they succeed and for certain errors, but in some situations (malformed response, network connectivity, …) they fail, and we have to do a bit of extra work to mould the response into something we can use.

You may have noticed the ServiceErrors and SecurityErrors event types above, alongside more intuitive ones like GetAllBuildingsResponse. In fact, the entire frontend only works with event types defined in the Event sum type.

What I did was convert every response either into the type I am expecting or into a ServiceError or a SecurityError. This is done using custom-made makeRequest[B, A, E] operations like the one below.

My implementation can be found in the unfortunately named HttpClient – https://github.com/adrianfilip/reservation-booker/blob/master/booker-ui/src/main/scala/com/adrianfilip/booker/ui/services/HttpClient.scala.
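The classification step itself can be illustrated with a standard-library sketch. It is not the HttpClient code: `HttpOutcome`, `toEvent` and the decision to map 401/403 to `SecurityError` are assumptions made for illustration.

```scala
// Hypothetical result of an HTTP call: a transport failure or a status with a body.
enum HttpOutcome:
  case Failed(reason: String)
  case Response(status: Int, body: String)

sealed trait Event
final case class GetAllBuildingsResponse(buildings: List[String]) extends Event
final case class ServiceError(message: String) extends Event
final case class SecurityError(message: String) extends Event

// Every outcome becomes an Event: the expected one, a ServiceError or a SecurityError.
def toEvent[A](outcome: HttpOutcome)(decode: String => Option[A])(wrap: A => Event): Event =
  outcome match
    case HttpOutcome.Failed(reason) =>
      ServiceError(s"request failed: $reason")
    case HttpOutcome.Response(401 | 403, body) =>
      SecurityError(body)
    case HttpOutcome.Response(status, body) if status >= 200 && status < 300 =>
      // A successful status with an undecodable body is still a ServiceError.
      decode(body).map(wrap).getOrElse(ServiceError(s"malformed response: $body"))
    case HttpOutcome.Response(status, body) =>
      ServiceError(s"HTTP $status: $body")
```

Because the result is always an `Event`, handlers never have to deal with raw HTTP failures.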

It’s just easy to work with, so I almost always end up using it for stubs/mocks.

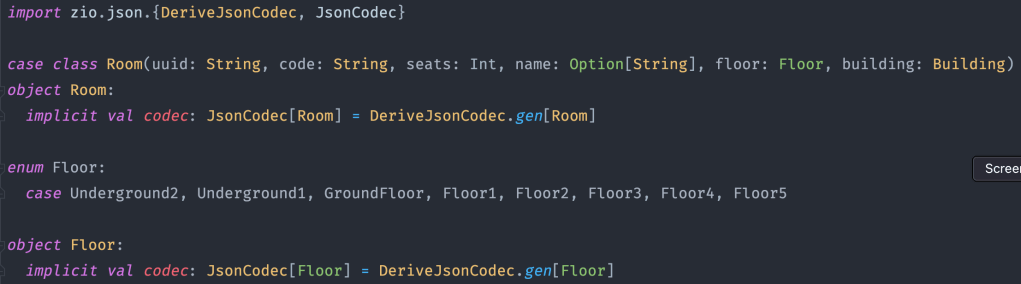

Being able to have shared domains is convenient but having to parse them in different ways is not. zio-json is a great fit for both backend and frontend use cases.

Here is an example of a case class with a Scala 3 enum for which codecs are made with zio-json.

This type is in a shared module (jvm, js) and can be used as is in both frontend and backend. Pretty straightforward.
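A minimal sketch of such a shared model, assuming zio-json is on the classpath (the `using` directive is for scala-cli and the version is only an example). `RoomType` and `Room` are hypothetical, not the project's types:

```scala
//> using dep dev.zio::zio-json:0.6.2
import zio.json.*

// A Scala 3 enum with a derived codec in its companion.
enum RoomType:
  case Single, Double, Conference

object RoomType:
  given JsonCodec[RoomType] = DeriveJsonCodec.gen[RoomType]

// A case class that uses the enum; the same code compiles for the JVM and Scala.js.
final case class Room(code: String, roomType: RoomType, seats: Int)

object Room:
  given JsonCodec[Room] = DeriveJsonCodec.gen[Room]
```

Encoding with `room.toJson` and decoding with `json.fromJson[Room]` then works identically on both sides.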

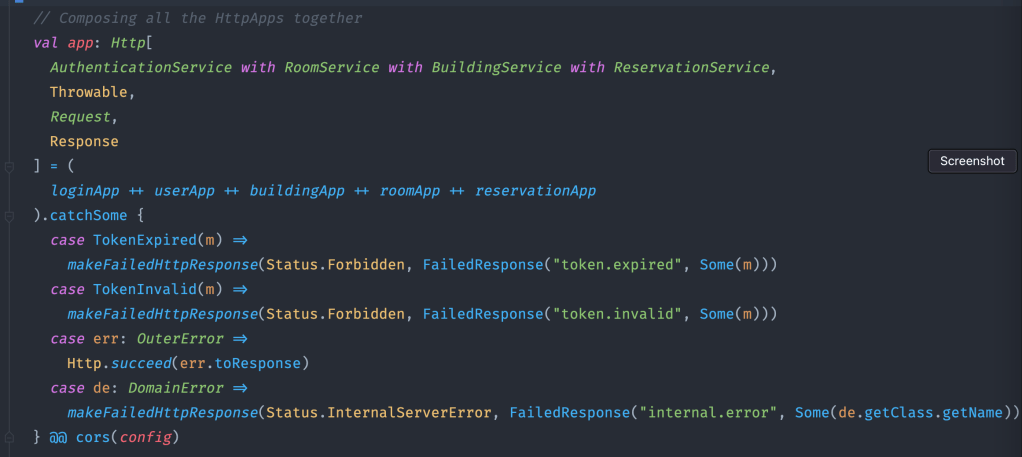

Composition is powerful on its own; on top of that, we can compose apps that are allowed to fail in ways we then handle only once, at the higher level.

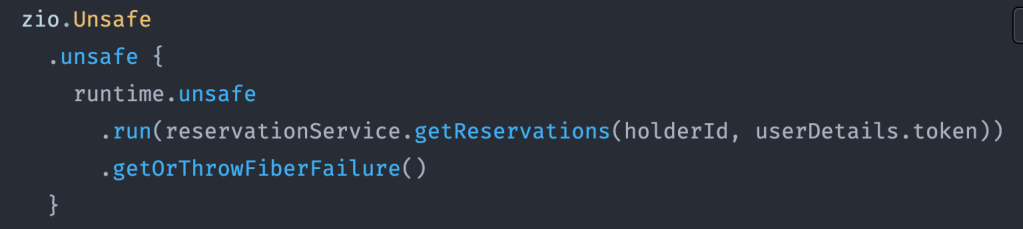

In the frontend app, effects have to be evaluated to interact with the backend. In ZIO 2 this is done using zio.Unsafe, like below.
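A minimal sketch, assuming ZIO 2 on the classpath; `fetchGreeting` is a hypothetical stand-in for a backend call and not the project's code. On the JVM `run` blocks until the effect finishes, while in a Scala.js frontend, where blocking is not possible, starting the effect with `runToFuture` is the safer option:

```scala
//> using dep dev.zio::zio:2.0.21
import zio.*

// Hypothetical effect standing in for a call to the backend.
val fetchGreeting: Task[String] = ZIO.succeed("hello from the backend")

// Blocks the current thread until the effect completes (JVM only).
def runBlocking[A](effect: Task[A]): A =
  Unsafe.unsafe { implicit unsafe =>
    Runtime.default.unsafe.run(effect).getOrThrowFiberFailure()
  }

// Starts the effect and returns a Future, which also works in the browser.
def runInBackground[A](effect: Task[A]): scala.concurrent.Future[A] =
  Unsafe.unsafe { implicit unsafe =>
    Runtime.default.unsafe.runToFuture(effect)
  }
```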

I could not find a Laminar datepicker, so I made one. You can find it in the project here: https://github.com/adrianfilip/reservation-booker/blob/master/booker-ui/src/main/scala/com/adrianfilip/components/datepicker/Datepicker.scala

Linking the proof of concept again so you don’t have to scroll back up: https://github.com/adrianfilip/reservation-booker

You can find me on:

EDIT: zio-properties 1.0 is now available on Maven Central

"com.adrianfilip" %% "zio-properties" % "1.0"

I like versatility when configuring application properties.

For instance, in Kubernetes I use environment variables, while locally or in a local Docker container I may use property files, environment properties, command line arguments, system properties or a mix of any of them.

There are also many benefits to having a simple, easy way of loading properties that applies to multiple use cases.

Versatility and simplicity in this case can be reduced to:

To achieve that, I built a library called zio-properties (on top of zio-config and magnolia) that checks multiple sources and retrieves properties based on a standard resolution order.

With one line of code you can now create a ZLayer that loads your properties from 5 default sources.

where AppProperties is the case class for your properties.

For this example let’s define it as:

The property sources used by zio-properties are (in the order of their resolution):

zio-properties will look in the property sources based on resolution order and will use the value from the first place where it finds it.

For instance (using the AppProperties above), consider this scenario:

application.properties: db.port=3306

Environment variables: db_port=6000

The property is not mentioned anywhere else.

In this case zio-properties uses 6000, because environment variables come ahead of property files in the resolution order.
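The first-match-wins rule can be modelled in a few lines. This is a hypothetical sketch, not zio-properties' code: only the two sources from the example are modelled, and the `.` to `_` translation for environment variable names is inferred from the `db_port` example above.

```scala
// A named source that may or may not know a key.
final case class Source(name: String, lookup: String => Option[String])

// Environment variable names use underscores, so db.port is looked up as db_port.
def envSource(env: Map[String, String]): Source =
  Source("environment variables", key => env.get(key.replace('.', '_')))

def fileSource(props: Map[String, String]): Source =
  Source("property files", props.get)

// Walk the sources in resolution order and return the first value found, with its origin.
def resolve(sources: List[Source], key: String): Option[(String, String)] =
  sources.iterator
    .map(s => s.lookup(key).map(v => s.name -> v))
    .collectFirst { case Some(hit) => hit }
```

With environment variables listed before property files, resolving `db.port` yields `6000` from the environment, as in the scenario above.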

How can you use it?

As you can see, in a few lines of code you have created your AppProperties and are ready to use them in your application. Also, because AppProperties is provided by a layer, you can specify it as the R in ZIO[R, E, A] for your effects instead of passing it around as a parameter. That looks like this:
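A minimal ZIO 2 sketch of that pattern. The shape of `AppProperties`, the connection URL and the values are hypothetical, and `ZLayer.succeed` stands in for the layer that zio-properties would build:

```scala
//> using dep dev.zio::zio:2.0.21
import zio.*

// Hypothetical shape for the properties case class.
final case class DbProperties(host: String, port: Int)
final case class AppProperties(db: DbProperties)

// Stand-in for the layer created by zio-properties.
val propertiesLayer: ULayer[AppProperties] =
  ZLayer.succeed(AppProperties(DbProperties("localhost", 6000)))

// The effect declares AppProperties in R instead of taking it as a parameter.
val connectionUrl: URIO[AppProperties, String] =
  ZIO.serviceWith[AppProperties](p => s"jdbc:mysql://${p.db.host}:${p.db.port}")

// Providing the layer eliminates the requirement: R becomes Any.
val program: UIO[String] = connectionUrl.provideLayer(propertiesLayer)
```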

You can find the entire zio-properties project (with example and tests) on my Github: https://github.com/adrianfilip/zio-properties.

I recommend you also check out zio-config (@afsalt2, @jdegoes) and magnolia (@propensive).

EDIT: Now it also supports HOCON.

EDIT2: Available now on Maven Central: "com.adrianfilip" %% "zio-properties" % "1.0"

Several years ago, I was developing an application that dealt with money. It handled loans, deposits, monthly payments, and reports. Unlike other apps, where eventual consistency and stale data may not be an issue, here one slip could lead to financial ruin for the company.

Computing the distribution of a client payment depended on a huge number of factors, including the accounts, the current customer rank, the current personalized interests established with the company, the current global rates, the client loan status, and sometimes other factors!

I was terrified just contemplating the ways in which the application could go wrong, mostly due to race conditions:

There’s a potential for many things to go wrong, including the dreaded double-spend!

If you’ve read my Scale Aware Architecture article, you may remember that I mentioned my solution to this problem in Kotlin, Spring Reactor, and Arrow: a novel MultiLaneSequencer concurrency structure, designed precisely to solve my problem.

The MultiLaneSequencer allows you to enforce at runtime the order in which all received requests are processed, with user-specified guarantees on what is permitted to be concurrent, and what is required to be sequential.

MultiLaneSequencer allows us to handle concurrent and sequential requests across different lanes.

Given the following lanes and requests:

Lanes: 1 2 3 4

t0 Request 1: X X

t1 Request 2: X

t2 Request 3: X X

t3 Request 4: X X

where t0 < t1 < t2 < t3

The order in which the requests above are processed is:

This requires tricky logic to get right, with severe consequences for any bugs—bugs that will themselves be tricky to find!
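The ordering rule can be stated as a small pure function, which also helps when reasoning about tests. This is a hypothetical model, not the Kotlin implementation, and the lane sets in the usage note are illustrative: a request must wait for every earlier request that shares at least one lane with it.

```scala
final case class Request(name: String, lanes: Set[Int])

// Groups requests into dependency levels, in arrival order.
// Requests on the same level never share a lane, so they may run concurrently;
// a request starts only after all of its blockers, which sit on lower levels, finish.
def levels(requests: List[Request]): List[List[String]] =
  val reqs = requests.toVector
  val level = reqs.indices.foldLeft(Vector.empty[Int]) { (acc, i) =>
    // Earlier requests that share at least one lane with request i.
    val blockers = (0 until i).filter(j => reqs(j).lanes.exists(reqs(i).lanes.contains))
    acc :+ blockers.map(j => acc(j)).maxOption.fold(0)(_ + 1)
  }
  reqs.zip(level)
    .groupBy(_._2)
    .toList
    .sortBy(_._1)
    .map { case (_, group) => group.map(_._1.name).toList }
```

With R1 on lanes {1, 2}, R2 on {2}, R3 on {3, 4} and R4 on {2, 4}, `levels` returns `List(List("R1", "R3"), List("R2"), List("R4"))`: R1 and R3 can start together, R2 waits for R1, and R4 waits for R2 and R3.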

In the rest of the article, I will show you the Kotlin solution I came up with at that time, and then compare it with the Scala + ZIO solution I have since switched to.

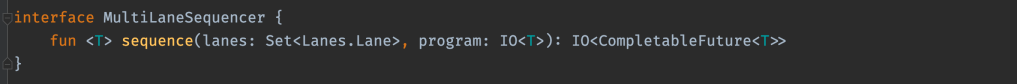

First of all, my external API is a function that takes a Set<Lane> and a program (IO<A>) and returns an IO<CompletableFuture<A>>: a description of a program that does the same thing, but in a laning context. In other words, I return a program that describes an async effect running in a laning context.

The external API looks like this:

After some false starts and throwaway code, I came up with the following solution:

Based on this description, I created a model for a Message sum type:

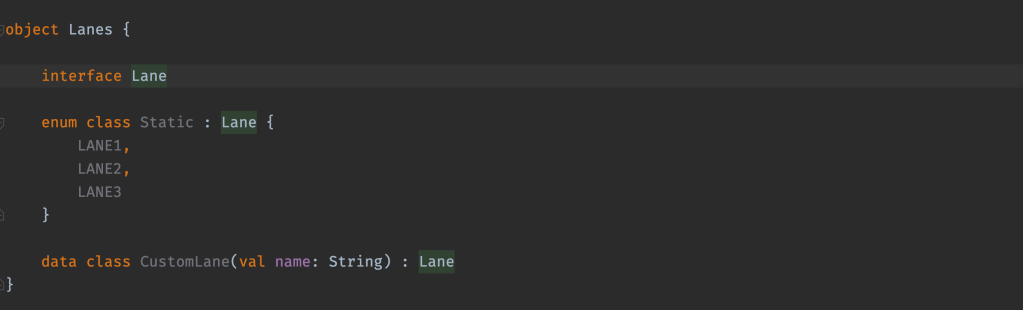

Then I created a sum type for Lane:

Notice that you can define lanes at whatever granularity you want. For instance, one lane can be CLIENTS, so all operations on clients are sequential. But a lane can also target a specific client, “data class CustomLane(val name: String): Lane”, so operations on the same client stay sequential while operations on different clients can run in parallel.
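The same idea expressed as a Scala 3 enum, as a hypothetical sketch: only CLIENTS and CustomLane come from the text, while `Accounts`, `clientLane` and `mustBeSequential` are illustrative. Two requests must run sequentially exactly when their lane sets overlap:

```scala
enum Lane:
  case Clients                 // coarse: every client operation shares this lane
  case Accounts
  case Custom(name: String)    // fine-grained: any lane you can name

// Two requests conflict if they need at least one common lane.
def mustBeSequential(a: Set[Lane], b: Set[Lane]): Boolean = a.exists(b.contains)

// One lane per client, so different clients do not block each other.
def clientLane(clientId: String): Lane = Lane.Custom(s"client-$clientId")
```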

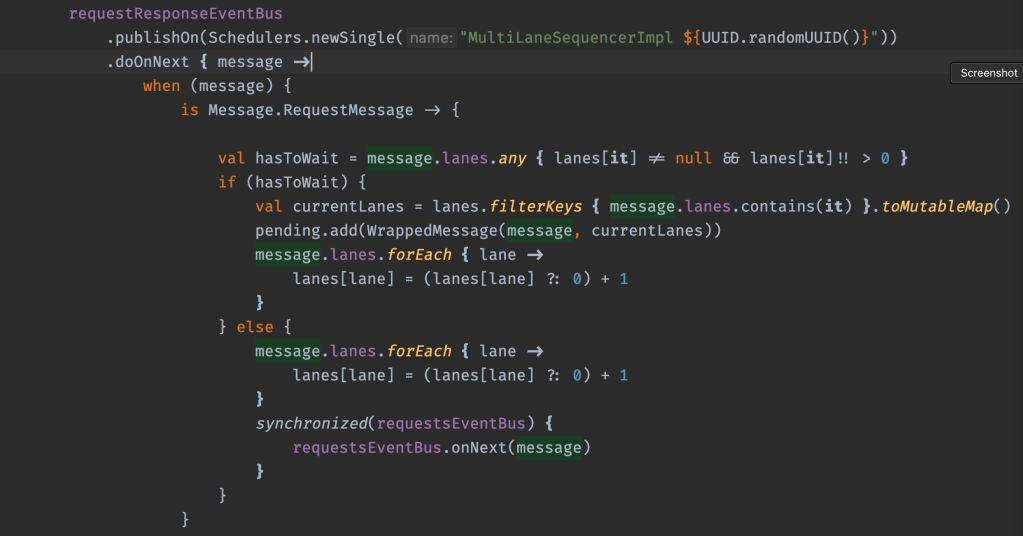

Now that I have the event bus and a functional data model, let’s see what the implementation looks like.

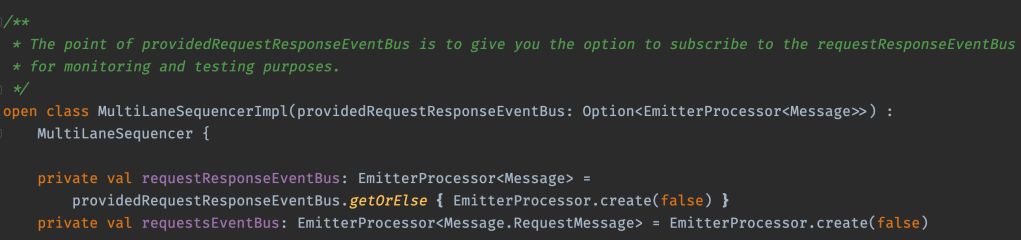

I use two event buses, one for request/responses, and one for requests, with an option for testing:

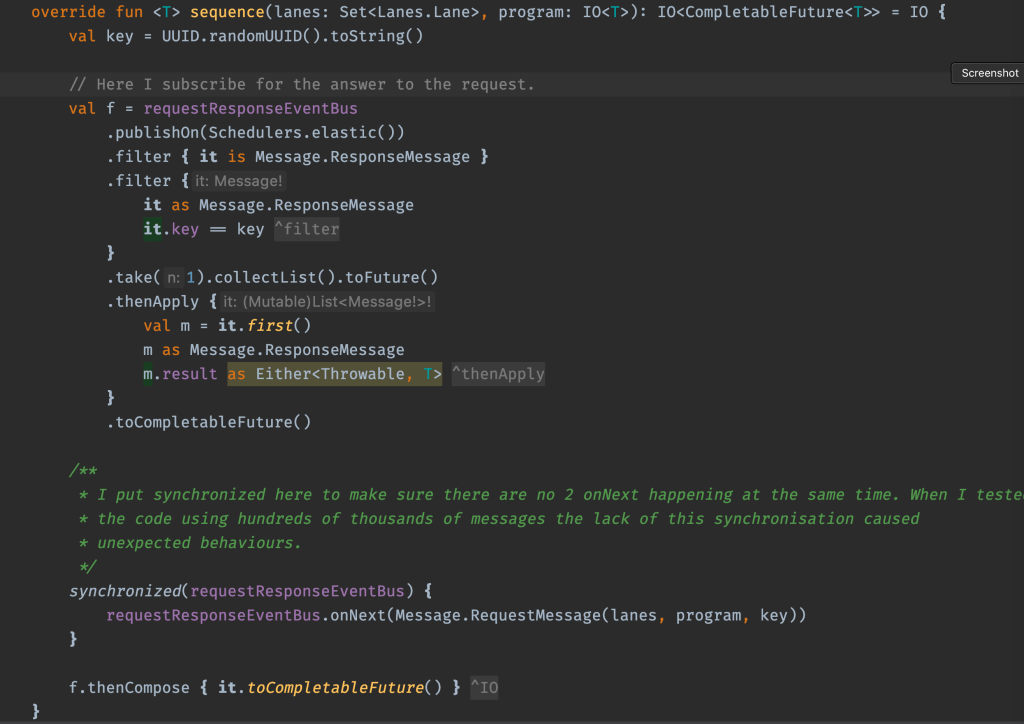

The sequence operation is implemented as follows:

I subscribe to the requestResponseEventBus for the result, then I publish the request. Note that subscribing and publishing must happen in this precise order; otherwise the result may already have been published by the time the subscriber is initialised!
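Why the order matters can be shown with a tiny hot bus that does not replay past values. The `HotBus`, `Request` and `Response` names are hypothetical, and the processor answers synchronously, which is the extreme case of a response arriving before a late subscriber is ready:

```scala
import scala.collection.mutable.ListBuffer

// A hot bus: values go only to subscribers present at publish time, with no replay.
final class HotBus[A]:
  private val subscribers = ListBuffer.empty[A => Unit]
  def subscribe(f: A => Unit): Unit = subscribers += f
  def publish(a: A): Unit = subscribers.foreach(_(a))

final case class Request(id: String, input: Int)
final case class Response(id: String, output: Int)

// Wires a processor that doubles the input and replies immediately.
def wire(): (HotBus[Request], HotBus[Response]) =
  val requests = HotBus[Request]()
  val responses = HotBus[Response]()
  requests.subscribe(r => responses.publish(Response(r.id, r.input * 2)))
  (requests, responses)

// Correct order: subscribe for the response first, then publish the request.
def subscribeThenPublish(id: String, input: Int): Option[Int] =
  val (requests, responses) = wire()
  var result: Option[Int] = None
  responses.subscribe(resp => if resp.id == id then result = Some(resp.output))
  requests.publish(Request(id, input))
  result

// Wrong order: the response is emitted before anyone listens, so it is lost.
def publishThenSubscribe(id: String, input: Int): Option[Int] =
  val (requests, responses) = wire()
  var result: Option[Int] = None
  requests.publish(Request(id, input))
  responses.subscribe(resp => if resp.id == id then result = Some(resp.output))
  result
```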

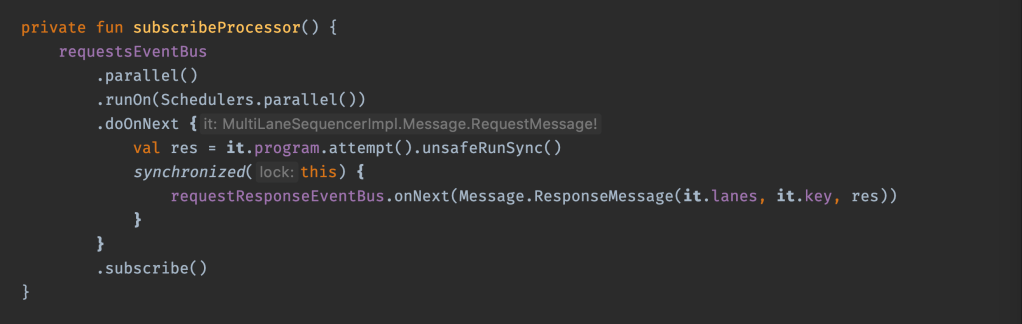

That was easy enough. What about subcribeProcessor, the parallel consumer of requestsEventBus, which executes the program in the consumed request and then publishes the RequestResponse to the requestResponseEventBus?

This one is implemented as follows:

The sequential consumer is implemented as follows:

Where:

Testing the Kotlin Solution

The trickiest part of the Kotlin solution was figuring out how to test it: creating a realistic test environment and a correct check. Considering the nature of the problem, the test would need to check that the distribution and order of the responses on the lanes match the distribution of the requests and respect their global ordering.

Think of it like the horseshoe game: if I throw blue, red and then yellow, then when I check the stake I should only see blue, red, yellow. Any other order would represent a failure.
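Expressed as code, such a check could look like the hypothetical sketch below: for every lane, the completion order restricted to the requests on that lane must equal their submission order. `Req` and `respectsLaneOrder` are illustrative names, not the ones used in the repository:

```scala
final case class Req(id: Int, lanes: Set[Int])

// True if, on every lane, requests completed in the order they were submitted.
def respectsLaneOrder(submitted: List[Req], completed: List[Int]): Boolean =
  val lanes = submitted.flatMap(_.lanes).distinct
  lanes.forall { lane =>
    // Submission order of the requests that use this lane.
    val expected = submitted.filter(_.lanes.contains(lane)).map(_.id)
    val onLane = expected.toSet
    // Completion order projected onto the same requests.
    completed.filter(onLane.contains) == expected
  }
```

Requests on disjoint lanes may interleave freely; only the per-lane projections are compared.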

At the time, I tested the code with several orders of magnitude more concurrency than expected real-world usage. I did not push further, but I see no reason why it wouldn’t hold under even more concurrency.

The tests I developed, along with the implementation, can be found in the Github repository, also linked at the end of this article.

Until recently, Kotlin + Spring + Reactor + Arrow seemed unbeatable as my “go to” choice for creating new applications. That stack has a great language (Kotlin), great tooling (IntelliJ), a powerful library for creating reactive applications (Spring Reactor), an answer for functional programming (Arrow), and a great, big community behind it. You can trust it.

Then in 2018, I noticed the IO[E, A] effect from John De Goes. Over time, this effect turned into ZIO[R,E,A] and a great community grew around the data type, along with rapid development of an ecosystem.

About a year ago, I switched to ZIO for new projects. Since then, I have revisited some of my old projects and have been migrating some of them to Scala + ZIO.

One of those is the MultiLaneSequencer construct.

As you may have noticed, the Kotlin + Spring Reactor + Arrow solution is not necessarily easy or simple. Also as you can see, it’s not fully functional, which limits composability and hampers testability.

Could Scala + ZIO provide a simple and pure functional version?

Let’s find out!

ZIO provides a data type called “ZIO[R, E, A]” that represents a whole asynchronous, concurrent workflow, which can be run in some environment of type R, might error with some value of type E, and will (hopefully) succeed with a value of type A.

Values with this type are called ZIO effects, and ZIO effects compose in a type safe fashion with other ZIO effects, allowing us to build up big programs out of simple pieces.

ZIO is built on next-generation asynchronous fibers, which allow high performance and high scalability without any blocking. ZIO is also packed with data structures that make it easier to build concurrent applications, like async queues, semaphores, and promises.

The most powerful tool in ZIO for building concurrent structures is STM. STM, which stands for Software Transactional Memory, allows building up transactions over shared state. Different fibers can commit different transactions to the same shared state at the same time, and ZIO ensures they are executed with the “ACID” guarantees that databases provide (but without the ‘D’, “durability”).

Because STM is composable and purely functional, it means you can build up larger concurrent structures from smaller ones. Because STM is declarative, it means you never need to use locks or other low-level primitives that are deadlock prone. All STM code is automatically purely asynchronous, and can be safely canceled for timeout purposes.

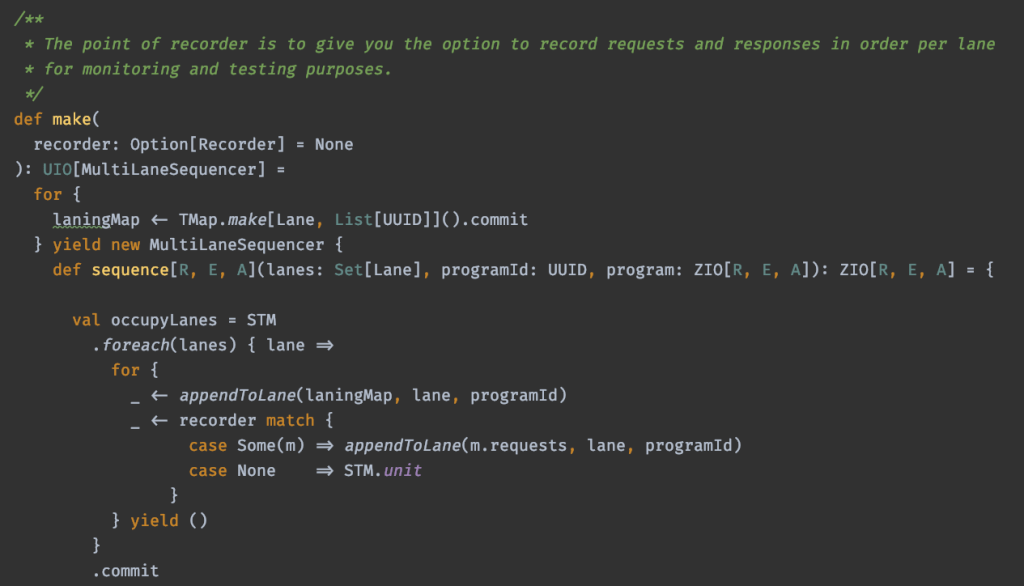

What would a solution that uses STM look like?

For Lane, there wouldn’t be much of a difference besides the syntax that Scala requires for constructing sum types:

The API for the MultiLaneSequencer still defines an operation that receives a set of Lanes, but the effect being executed and returned is now a ZIO effect:

Note that randomness in generating a globally-unique identifier (a side effect) is also removed by passing the effect id as a parameter:

The implementation I came up with relies heavily on the transactional guarantees of STM:

There you have it, the full solution in Scala + ZIO—just a few lines of declarative and type-safe code.

I couldn’t believe the ZIO STM solution is this straightforward!
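For readers who want to see the shape of such a solution, here is a minimal sketch of an STM-based sequencer written against ZIO 2. It is not the code from the repository: `SequencerState`, the ticket queues and the method names are assumptions. Each request takes a global ticket and joins the queue of every lane it needs in one transaction, then waits until it heads all of those queues:

```scala
//> using dep dev.zio::zio:2.0.21
import zio.*
import zio.stm.*

enum Lane:
  case Clients, Accounts
  case Custom(name: String)

// Next global ticket, plus the tickets waiting on each lane (oldest first).
final case class SequencerState(nextTicket: Long, queues: Map[Lane, Vector[Long]])

final class MultiLaneSequencer private (state: TRef[SequencerState]):

  // Take a ticket and append it to every requested lane in one transaction,
  // so two requests keep the same relative order on every lane they share.
  private def enqueue(lanes: Set[Lane]): USTM[Long] =
    state.modify { s =>
      val ticket = s.nextTicket
      val queues = lanes.foldLeft(s.queues)((q, lane) => q.updated(lane, q.getOrElse(lane, Vector.empty) :+ ticket))
      (ticket, SequencerState(ticket + 1, queues))
    }

  // Retry (suspending the fiber, not a thread) until the ticket heads every lane it uses.
  private def awaitTurn(ticket: Long, lanes: Set[Lane]): USTM[Unit] =
    state.get.flatMap { s =>
      STM.check(lanes.forall(lane => s.queues.getOrElse(lane, Vector.empty).headOption.contains(ticket)))
    }

  private def release(ticket: Long, lanes: Set[Lane]): USTM[Unit] =
    state.update { s =>
      s.copy(queues = lanes.foldLeft(s.queues)((q, lane) => q.updated(lane, q.getOrElse(lane, Vector.empty).filterNot(_ == ticket))))
    }

  // Runs the program after all earlier requests on overlapping lanes;
  // the ticket is released even if the program fails or is interrupted.
  def sequence[R, E, A](lanes: Set[Lane])(program: ZIO[R, E, A]): ZIO[R, E, A] =
    ZIO.acquireReleaseWith(enqueue(lanes).commit)(ticket => release(ticket, lanes).commit) { ticket =>
      awaitTurn(ticket, lanes).commit *> program
    }

object MultiLaneSequencer:
  def make: UIO[MultiLaneSequencer] =
    TRef.make(SequencerState(0L, Map.empty)).commit.map(new MultiLaneSequencer(_))

// Usage: 100 concurrent read-then-write increments on one lane lose no updates.
val noLostUpdates: UIO[Int] =
  for
    sequencer <- MultiLaneSequencer.make
    counter   <- Ref.make(0)
    increment  = counter.get.flatMap(n => ZIO.yieldNow *> counter.set(n + 1))
    _         <- ZIO.foreachParDiscard(1 to 100)(_ => sequencer.sequence(Set(Lane.Clients))(increment))
    result    <- counter.get
  yield result
```

Without the sequencer, the yield between read and write would let concurrent increments overwrite each other; with it, `noLostUpdates` yields 100.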

The test for the ZIO solution flowed naturally from the implementation. I was surprised by how composable testing can be and how much control you could have over all aspects, including passage of time.

Compared with the Kotlin + Spring Reactor + Arrow tests, having this degree of control over effects made reasoning and testing much easier for me in Scala + ZIO.

A cool thing about the composability of STM and functional programming is that if you rearrange the pieces or remove one, you can still build something useful!

For instance, if I remove the sequentiality part from MultiLaneSequencer, I will have a new construct, let’s call it MultiLaneLocker, which allows me to control concurrent execution based on lanes, but provides no global ordering guarantees.

Practically speaking, this means that given the following situation:

L – lane

P – program

L1 L2 L3 L4

t0 P1 X X

t1 P2 X

t2 P3 X X

t3 P4 X X

t0 < t1 < t2 < t3

MultiLaneLocker guarantees that:

– P1, P2 and P4 will run one at a time

– P3 and P4 will run one at a time

but it makes no guarantees about the order in which they run.

This solution is just a subset of the first solution:
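A minimal ZIO 2 sketch of such a locker, with hypothetical names and not the repository code: it keeps only the set of busy lanes, so there are no tickets and no ordering guarantees.

```scala
//> using dep dev.zio::zio:2.0.21
import zio.*
import zio.stm.*

enum Lane:
  case Clients, Accounts
  case Custom(name: String)

// Only the currently busy lanes are tracked.
final class MultiLaneLocker private (busy: TRef[Set[Lane]]):

  // Wait until none of the lanes is busy, then mark them all busy in the same transaction.
  private def lock(lanes: Set[Lane]): USTM[Unit] =
    busy.get.flatMap(b => STM.check(!b.exists(lanes.contains))) *> busy.update(_ ++ lanes)

  private def unlock(lanes: Set[Lane]): USTM[Unit] = busy.update(_ -- lanes)

  // acquireReleaseWith runs the acquire step uninterruptibly, so in this sketch
  // a fiber waiting for its lanes cannot be interrupted until it gets them.
  def withLanes[R, E, A](lanes: Set[Lane])(program: ZIO[R, E, A]): ZIO[R, E, A] =
    ZIO.acquireReleaseWith(lock(lanes).commit)(_ => unlock(lanes).commit)(_ => program)

object MultiLaneLocker:
  def make: UIO[MultiLaneLocker] = TRef.make(Set.empty[Lane]).commit.map(new MultiLaneLocker(_))

// Usage: mutual exclusion on a lane still prevents lost updates.
val lockedIncrements: UIO[Int] =
  for
    locker    <- MultiLaneLocker.make
    counter   <- Ref.make(0)
    increment  = counter.get.flatMap(n => ZIO.yieldNow *> counter.set(n + 1))
    _         <- ZIO.foreachParDiscard(1 to 100)(_ => locker.withLanes(Set(Lane.Clients))(increment))
    result    <- counter.get
  yield result
```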

When it comes to solving real world tricky concurrency problems, there is no doubt Kotlin + Spring Reactor + Arrow allows you to build asynchronous and functional solutions.

Yet it is also clear from this example that the Scala + ZIO solution is much simpler and was written faster. The Scala + ZIO solution is easier to test, easier to compose, and easier to understand, and can be quickly tweaked to generate new variations for changing requirements.

Next time I need to solve a tricky problem, I’ll reach for Scala + ZIO, because the cost of solving these problems is much lower. I recommend that readers who have concurrency challenges on the JVM check out ZIO STM before falling back on what they already know.

You can find the code & tests for both Scala and Kotlin solutions on my GitHub:

Thanks John De Goes and Adam Fraser for greatly accelerating my understanding of ZIO STM and ZIO Test.

How can someone coming from a Spring background quickly get their bearings with FP and ZIO?

The answer is below.

I onboard new team members coming from Java + Spring backgrounds to Scala + ZIO by starting with a 1-2 day training session where I present the main functional concepts they will work with. 1-2 days is enough to cover the basics and have someone at a level where they can begin to contribute to an existing codebase.

Even though people are very receptive, understanding how that translates to a regular project is not always a straight line. Showing them how it is done on complex projects or ones where they are unfamiliar with the domain sometimes diverts focus from the main point.

One of the reasons a framework like Spring is highly successful is that it provides clear, simple examples of what it has to offer.

So why not provide a simple example of a Scala + ZIO setup for a regular scenario most people are familiar with?

A regular blog post would give you a Pet CRUD. But my readers deserve the best, this will be an Employee CRUD. 🙂

Let’s make a CRUD for an Employee using Scala + ZIO and see what it looks like.

I will use the following DDD based directory structure that should be familiar enough to most.

Let’s start with the model. The Employee will look like this:

Notice the use of the smart constructor to forbid creation of invalid state.
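A minimal sketch of the smart constructor pattern. The fields and validation rules are hypothetical; the post's own Employee lives in the zio-crud-sample repository. In Scala 3 a private constructor also makes the generated `apply` and `copy` private, so `make` is the only way in:

```scala
// Hypothetical fields; the constructor is private, so instances come only from make.
final case class Employee private (id: String, name: String, age: Int)

object Employee:
  // Invalid input yields a Left with a reason instead of an Employee.
  def make(id: String, name: String, age: Int): Either[String, Employee] =
    if id.trim.isEmpty then Left("id must not be blank")
    else if name.trim.isEmpty then Left("name must not be blank")
    else if age < 18 then Left(s"age must be at least 18, got $age")
    else Right(new Employee(id, name.trim, age))
```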

Next we have the EmployeeRepository.

Notice the use of ZIO[R, E, A] here. The short description is:

ZIO[R, E, A] describes a program where:

R – is the type of the environment needed to run the program (tldr: R = the dependency)

E – is the type of the failure the program can fail with

A – is the type of the result of running the program successfully

This setup may look a bit verbose but it’s worth it.

Q: What happens in ZIO.accessM(_.get.save(employee)), for instance?

A: ZIO.accessM is used to access the provided environment. So you can read the above as: Give me the provided EmployeeRepository.Service and call its save() method.

Q: Where is EmployeeRepository provided and who provides it?

A: Any client that wants to use the save program ZIO[EmployeeRepository, PersistenceFailure, Employee] has to provide it when it uses it.
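ZIO 2 dropped `Has`, and the idiomatic equivalent of `ZIO.accessM(_.get.save(employee))` there is `ZIO.serviceWithZIO[EmployeeRepository](_.save(employee))`. A minimal sketch under that assumption, with a hypothetical in-memory implementation and simplified `Employee` and `PersistenceFailure` types:

```scala
//> using dep dev.zio::zio:2.0.21
import zio.*

final case class Employee(id: String, name: String)
final case class PersistenceFailure(message: String)

// The service interface: what the repository can do, and how it can fail.
trait EmployeeRepository:
  def save(employee: Employee): IO[PersistenceFailure, Employee]
  def findById(id: String): IO[PersistenceFailure, Option[Employee]]

// Hypothetical in-memory implementation backed by a Ref.
final class InMemoryEmployeeRepository(store: Ref[Map[String, Employee]]) extends EmployeeRepository:
  def save(employee: Employee): IO[PersistenceFailure, Employee] =
    store.update(_.updated(employee.id, employee)).as(employee)
  def findById(id: String): IO[PersistenceFailure, Option[Employee]] =
    store.get.map(_.get(id))

object EmployeeRepository:
  // Accessors: "give me the provided EmployeeRepository and call its method".
  def save(employee: Employee): ZIO[EmployeeRepository, PersistenceFailure, Employee] =
    ZIO.serviceWithZIO[EmployeeRepository](_.save(employee))

  def findById(id: String): ZIO[EmployeeRepository, PersistenceFailure, Option[Employee]] =
    ZIO.serviceWithZIO[EmployeeRepository](_.findById(id))

  val inMemory: ULayer[EmployeeRepository] =
    ZLayer.fromZIO(Ref.make(Map.empty[String, Employee]).map(store => new InMemoryEmployeeRepository(store): EmployeeRepository))

// Describe the program against the interface, then provide the implementation at the edge.
val program: IO[PersistenceFailure, Option[Employee]] =
  (EmployeeRepository.save(Employee("e1", "Ada")) *> EmployeeRepository.findById("e1"))
    .provideLayer(EmployeeRepository.inMemory)
```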

Now that we have this, we can move on to the API. Since my business logic will always be the same here and only the context (environment) may change, I can use an object that describes the business logic of each operation, like this:

Notice here that:

Next we have the Controller. Because I wanted to keep things simple the user will interact with the app via the console. In the controller operations I implement the interaction with the user.

There are 2 things to notice here:

How about running this whole thing?

First, I create a program that looks like this. It describes how the high-level interactions with the user will go, and it also acts as a dispatcher from input to each Controller operation:

You can notice here:

What is that ApplicationEnvironment? It is my alias for the environments required to run this app. (See picture below.)

Up to this point I only described programs and how they compose.

To actually run them, I need a Console and an EmployeeRepository. Console is provided by ZIO, and for EmployeeRepository I have a custom in-memory implementation, which I compose with other environments (like Console) to create the localApplicationEnvironment layer.

And how do I provide all this to my main program? Like in the picture below.

Notice here that:

And with this we have a full Employee CRUD controlled by a CLI implemented only with Scala + ZIO.

Notice that:

– you can create fully composable software with ZIO

– you don’t need dependency injection

Hope this answers some of the questions regarding the transition to FP with ZIO.

The entire project is available at https://github.com/adrianfilip/zio-crud-sample, feel free to clone the repo, run the app and play with it.

You can find me https://twitter.com/realAdrianFilip and https://www.linkedin.com/in/adrianfilip/.

Extra mentions:

My name is Adrian Filip and I have been a software developer since 2007.

Somewhere between then and now, I was working on a banking-like app using Kotlin, Spring Boot and Arrow.

Everything was going well, yet I was finding it difficult to express some scale aspects without either mucking up my business layer a bit or trading away some composability by relying more on the infrastructure layer. (See my previous post Why modularity? to understand why I abhor a lack of composition in designs and implementations.)

As a result, I took it upon myself to improve the DDD model* by adding a layer that is all about scale concerns, keeping the business and infrastructure layers untouched by this scale corruption**. (If you want to learn more about DDD, I highly recommend going to the source: Vaughn Vernon’s books.) If you are familiar with DDD, you might have guessed from what I wrote so far that I’m in the Onion Architecture camp. (Don’t be fooled by the name: unlike the vegetable, in this case not using it will make you cry.)

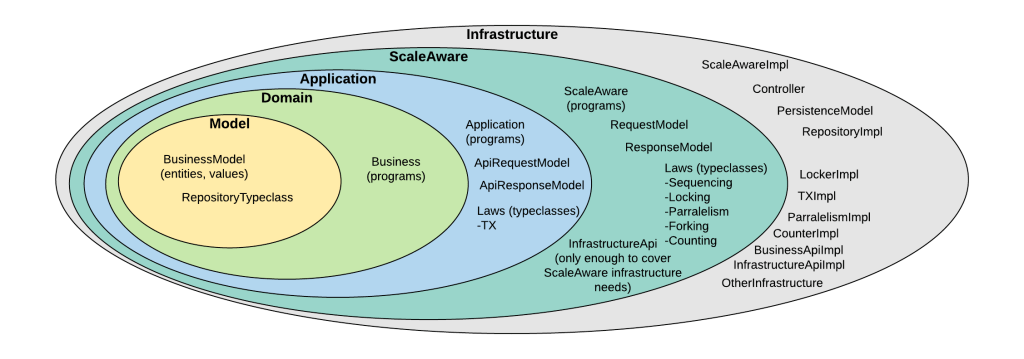

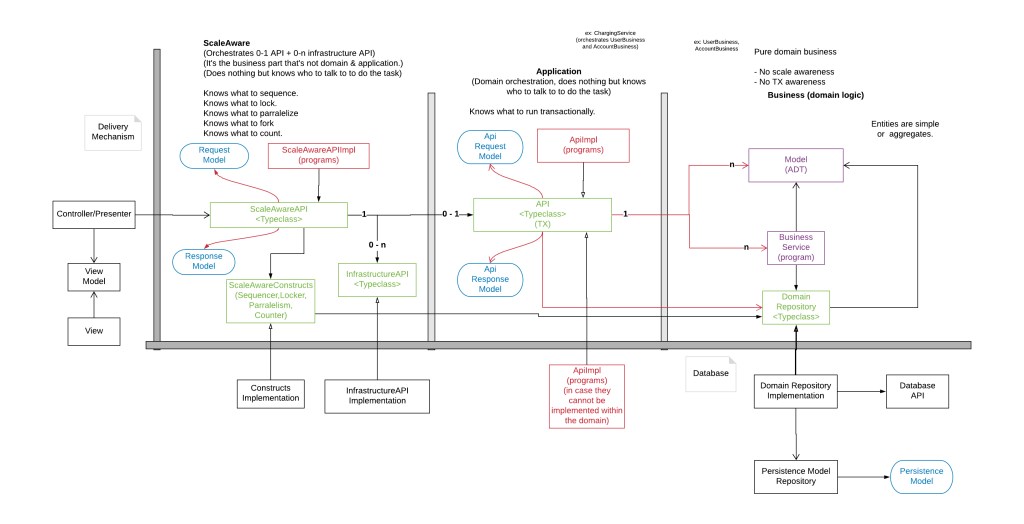

What does this new Onion look like? Something like this.

Where you see the term program it means the description of a program. Remember that we want composability so we are working with descriptions of programs, which are values.

Why did I add that new layer for that application? Maybe the next picture will clarify it a bit.

(NOTE: ScaleAwareAPI, API, InfrastructureAPI and DomainRepository are traits only, not typeclasses – will update the picture soon)

I added the ScaleAware layer because I wanted:

There are some basic guidelines (read as mandatory rules) associated with this model:

(Example of an infrastructure service: a BackupService interface with a single method called backup. The interface just declares that operation, and the implementation handles the details of what a backup actually means for this app. So the scale-aware concern of creating a backup can be defined at the scale-aware level via the interface. The implementation can back up a NoSQL database, an RDBMS or a file, in whatever way the infrastructure decides. The backup step can still be encoded in the scale-aware instructions; only how it is implemented is pushed to the infrastructure layer, outside the ScaleAware layer’s clean API.)
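The rule can be sketched in a few lines. All names here are hypothetical: the scale-aware side depends only on the `BackupService` interface, while the infrastructure side decides what a backup actually means.

```scala
// Scale-aware side: only the interface is visible here.
trait BackupService:
  def backup(): Either[String, Unit]

// A scale-aware step: "back up first, then run the operation".
// How the backup happens is not this layer's concern.
def withBackup[A](backupService: BackupService)(operation: => A): Either[String, A] =
  backupService.backup().map(_ => operation)

// Infrastructure side: concrete implementations, swappable without touching the step above.
final class FileBackupService(target: String) extends BackupService:
  def backup(): Either[String, Unit] = Right(())

final class FailingBackupService extends BackupService:
  def backup(): Either[String, Unit] = Left("backup storage unavailable")
```

If the backup fails, the operation is never run, and the failure surfaces as a value the caller can handle.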

But the actual power of the ScaleAware layer is given by the constructs that it uses. For example:

The Laning construct provides a way to sequence the execution of whatever programs you want, based on a dynamic definition of the “lanes” each program needs open to run.

An analogy that would describe it is:

Imagine a highway with n lanes where each car is magic and can use one or more lanes at the same time. But a car can pass the toll booth only if all the lanes it uses are free.

Lanes: 1 2 3 4 5

Car 1: x x

Car 2: x

Car 3: x x

Car 4: x x

The way the cars above pass the toll booth is:

– Car 1 and Car 3 reach the toll booth because their lanes are free

– Car 2 is queued up behind Car 1

– Car 4 is queued up behind cars 2 and 3, so until they both pass the booth it just has to wait.

The biggest increase in productivity on this project came when I switched it to FP. The next boost was defining the scale aware architecture. Using Arrow FP + ScaleAware made the cost of maintenance and of developing new features drop significantly.

But that was then. Since then I noticed that the Scala world did not stop innovating despite the great flame wars of the 2010s***. One of the results of that innovation is a library called ZIO.

I have been using ZIO almost exclusively for about a year now, and I am so impressed by it that I really want to see what my ScaleAware project would look like implemented in ZIO.

I think I will start the migration by comparing the implementations of one of my constructs between:

Arrow + Kotlin + Reactor + Future vs ZIO + Scala.

Place your bets!

* No DDD models were harmed in the design of the ScaleAware architecture.

**I sometimes use hyperbole. Not here, but I sometimes do.

*** Many were raised to Olympus (went to Haskell; some say they still describe how to drink nectar but never actually do it), some deserted (to Kotlin), I strategically retreated to Kotlin (next question, please) and others started raising llamas or something.